Until recently, Moore’s Law allowed developers to speed up their applications with little effort, because each new generation of processors ran the same code faster. With the arrival of multicore processors, developers now need to parallelize their applications to get the same performance improvements. One option is to use CUDA, a parallel computing platform and programming model developed by NVIDIA. It is designed specifically for general-purpose computing on graphics processing units (GPUs). With CUDA, developers can dramatically speed up computing applications by harnessing the power of GPUs.

Exposing Parallelism: Rewrite or Refactor?

In some cases, it is straightforward to expose the parallelism in your code so that CUDA can exploit it. One such case is when the majority of the application's total running time is spent in a few isolated portions of the code. A more difficult case is when the total running time is spread evenly across a wide portion of the source code. This is difficult because it requires either refactoring many lines of code or rewriting the entire application from scratch, both of which can be very error-prone. Fortunately, refactoring solutions (like Lattix Architect) can help reduce the analysis effort and the impact of the code changes.
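To see why the first case is the easier one, it can help to estimate the payoff with Amdahl's law: if a fraction of the running time is accelerated by some factor, the rest of the program still runs at its original speed. The sketch below is only an illustration. The function name `amdahl_speedup` and the 20x GPU speedup are assumptions chosen for the example, not measurements.

```python
def amdahl_speedup(hot_fraction: float, hot_speedup: float) -> float:
    """Overall speedup when `hot_fraction` of the run time is made `hot_speedup` times faster.

    Amdahl's law: the untouched (1 - hot_fraction) part still takes its original time.
    """
    if not 0.0 <= hot_fraction <= 1.0:
        raise ValueError("hot_fraction must be between 0 and 1")
    if hot_speedup <= 0.0:
        raise ValueError("hot_speedup must be positive")
    return 1.0 / ((1.0 - hot_fraction) + hot_fraction / hot_speedup)


if __name__ == "__main__":
    # Hypothetical profiles: one application spends 90% of its time in an
    # isolated hotspot, another has only 30% in any single place.
    # The 20x kernel speedup is an assumed figure for illustration.
    for fraction in (0.9, 0.3):
        overall = amdahl_speedup(fraction, 20.0)
        print(f"{fraction:.0%} of run time accelerated 20x -> {overall:.2f}x overall")
```

When the time is concentrated in one place, moving that single piece to the GPU pays off. When it is spread out, each individual change buys little, which is why so much more code has to be touched.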

From a technical perspective, rewriting your application might seem like the easiest way to parallelize your code. However, this approach carries enormous business risk, so most organizations prefer an incremental refactoring approach. Refactoring incrementally means you always have working, deployable software that can be delivered to customers and prospects. When done right, refactoring can also be more cost-effective than a total rewrite.

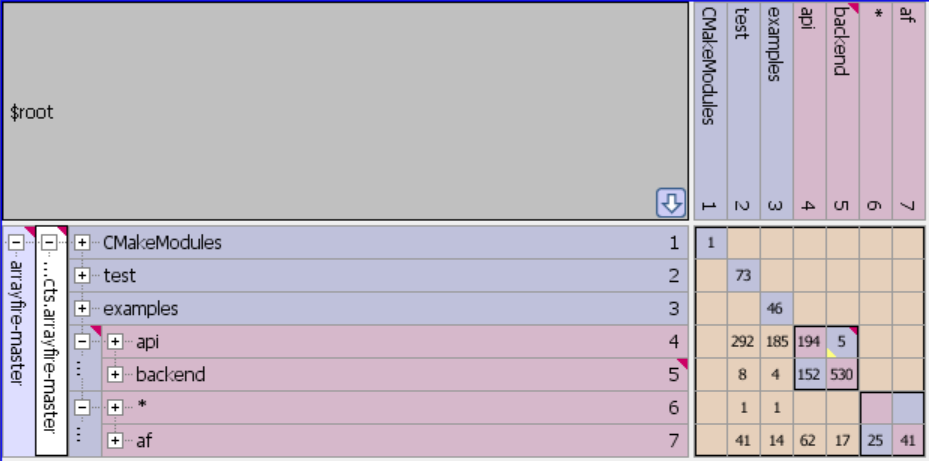

The incremental refactoring approach is still risky. Any time you change many lines of code, you increase the likelihood of introducing defects. Lattix can help you understand how and why various parts of your code are interconnected. It allows you to model your refactoring first, using “what-if” analysis, to arrive at an optimized solution. It also lets you control the updated design so that it does not degrade over time. The figure shows ArrayFire, a high-performance CUDA software library for parallel computing, in the Lattix DSM.

As a result, Lattix can reduce the risk of incrementally parallelizing parts of the code. Here are some of the specific ways Lattix can help:

- Understand what will be shared: Many applications share common platform libraries. Lattix can help identify the utilities that are commonly used across your application. If any of these libraries are performance bottlenecks, they are good candidates for parallelization.

- Understand the impact of change: By finding the dependencies on “hotspots” (areas of your code that your profiler says are bottlenecks), you can prioritize your list of candidates for parallelization by how risky it is to change that code. How much impact does this change have on the rest of the system? Will it require extra testing or code reviews?

- Manage the evolution: With an incremental refactoring approach, there are still business needs to meet (bug fixes, new functionality, etc.). With Lattix, you can refactor and do your daily work at the same time. You can see and update the new architecture against the current implementation and see how the new architecture is affected by ongoing changes. Integrating Lattix into your DevOps/CI pipeline makes this easier.
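The first two points can be sketched in a few lines of code. The example below is a minimal sketch, not Lattix's API or algorithm: the module names, the dependency graph, and the profiler shares are all hypothetical, and it only counts direct dependents rather than transitive ones. It finds modules that are widely shared and orders profiler hotspots so that the least risky changes come first.

```python
# Hypothetical dependency graph: module -> modules it directly depends on.
DEPENDS_ON = {
    "app.ui": ["core.math", "core.io"],
    "app.solver": ["core.math", "core.linalg"],
    "app.report": ["core.io"],
    "core.linalg": ["core.math"],
    "core.math": [],
    "core.io": [],
}

# Hypothetical profiler output: share of total run time spent in each hotspot.
PROFILE_SHARE = {"core.math": 0.45, "core.linalg": 0.30, "app.report": 0.10}


def dependents(graph):
    """Invert the graph: module -> set of modules that directly depend on it."""
    result = {module: set() for module in graph}
    for module, targets in graph.items():
        for target in targets:
            result.setdefault(target, set()).add(module)
    return result


def shared_modules(graph, min_dependents=2):
    """Modules used by at least `min_dependents` other modules, sorted by name."""
    incoming = dependents(graph)
    return sorted(m for m, users in incoming.items() if len(users) >= min_dependents)


def rank_candidates(graph, profile_share):
    """Order hotspots by change risk: fewest direct dependents first,
    ties broken by the larger share of run time."""
    incoming = dependents(graph)
    rows = [
        (module, share, len(incoming.get(module, ())))
        for module, share in profile_share.items()
    ]
    return sorted(rows, key=lambda row: (row[2], -row[1]))


if __name__ == "__main__":
    print("Widely shared modules:", shared_modules(DEPENDS_ON))
    for module, share, fan_in in rank_candidates(DEPENDS_ON, PROFILE_SHARE):
        print(f"{module}: {share:.0%} of run time, {fan_in} direct dependents")
```

In this made-up data, the module with the largest share of run time is also the one with the most dependents, so changing it has the widest impact. That is the kind of trade-off between payoff and risk that a dependency view makes visible before any code is changed.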

Lattix Can Help

Lattix can help you refactor your application to expose parallelism. This not only improves performance but also improves maintainability and software quality. Parallelizing your application does not have to be a risky, error-prone process that requires a massive rewrite. Instead, it can be accomplished in an orderly, incremental way that minimizes risk while delivering the benefits of parallelism.